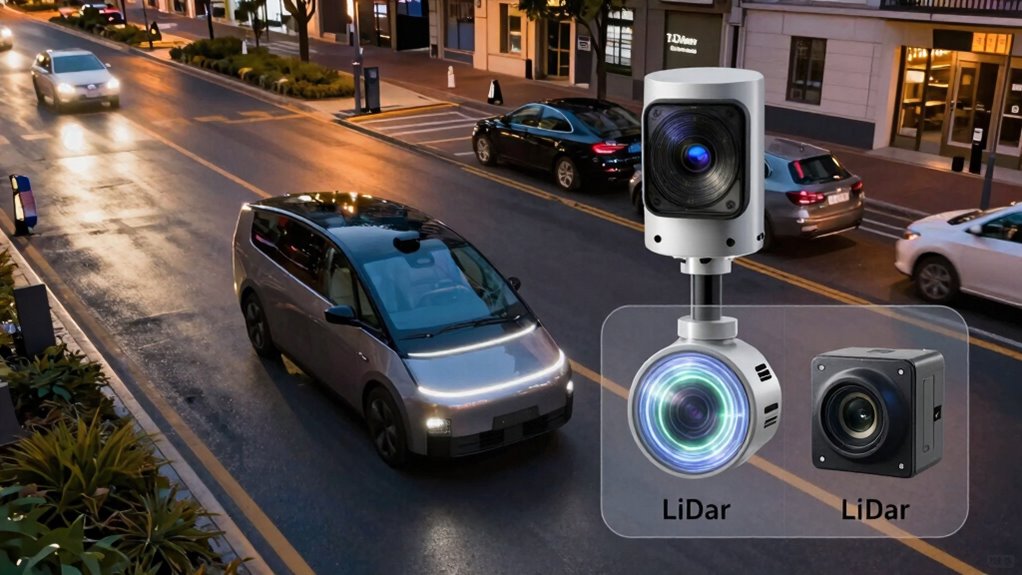

LiDAR and cameras serve different roles in autonomous navigation. LiDAR provides precise 3D mapping and does not depend on ambient light, but it struggles in fog or rain. Cameras capture rich visual details like textures and colors but are limited in low light and bad weather. Combining both sensors enhances reliability, especially in challenging environments. The sections below look at how the two systems complement each other and at the real-world trade-offs between them.

Key Takeaways

- LiDAR provides highly accurate 3D mapping but struggles in fog, rain, or snow, while cameras excel at recognizing visual details but perform poorly in low light.

- Sensor fusion combines LiDAR and camera data to improve perception, compensating for individual sensor limitations in adverse conditions.

- LiDAR is more costly, bulky, and complex to calibrate, whereas cameras are affordable, lightweight, and easier to integrate.

- Cameras excel at interpreting textures, colors, and complex scenes, essential for visual understanding and scene analysis.

- Effective navigation relies on integrating both sensors, leveraging their strengths to achieve reliable perception across diverse environments.

How Do LiDAR and Cameras Perceive the Environment Differently?

LiDAR and cameras perceive their surroundings in fundamentally different ways. LiDAR emits laser pulses and typically times their return to build precise, three-dimensional point clouds, allowing you to create detailed environmental maps even in low-light conditions. Cameras, on the other hand, capture two-dimensional images and rely on light and color information to interpret the environment. LiDAR’s strength lies in accurate distance measurement, while cameras excel at recognizing textures and colors. Sensor fusion combines data from both, integrating depth with visual cues so that you can better understand complex scenes and detect obstacles. By understanding these differences, you grasp how each sensor contributes uniquely to perception, enabling autonomous systems to navigate safely and efficiently.
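
To make the laser-pulse idea concrete, here is a minimal sketch of how a single return could become one point in a point cloud. It assumes a time-of-flight sensor that reports a round-trip time plus the beam's azimuth and elevation; the function names and the example numbers are illustrative, not taken from any particular LiDAR driver.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0  # speed of light in vacuum, metres per second


def tof_range_m(round_trip_s: float) -> float:
    """Range to a target from the round-trip time of one laser pulse."""
    # The pulse travels to the target and back, so halve the total path.
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def to_cartesian(range_m: float, azimuth_deg: float, elevation_deg: float):
    """Convert one range-and-angle return into an (x, y, z) point in metres."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    horizontal = range_m * math.cos(el)  # range projected onto the sensor's horizontal plane
    return (horizontal * math.cos(az), horizontal * math.sin(az), range_m * math.sin(el))


# A pulse that returns after 100 nanoseconds hit something roughly 15 m away.
distance = tof_range_m(100e-9)
point = to_cartesian(distance, azimuth_deg=30.0, elevation_deg=-2.0)
print(f"range: {distance:.2f} m, point: {point}")
```

Repeating this conversion for every beam direction in a scan is what produces the point cloud. A camera pixel, by contrast, carries color and brightness but no direct range value.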

What Are the Strengths and Weaknesses of LiDAR for Autonomous Vehicles?

Have you ever wondered why LiDAR is considered a critical sensor for autonomous vehicles? Its main strength is highly accurate 3D mapping of the environment, which is essential for precise navigation. However, LiDAR’s effectiveness depends heavily on proper sensor calibration; misalignment can lead to errors in perception. Because it supplies its own light, it works across a wide range of lighting conditions, but it struggles in heavy rain, fog, or snow. Data fusion plays a crucial role here, combining LiDAR with other sensors like cameras to improve reliability and fill gaps. LiDAR is also often expensive and can be bulky, which limits integration in some vehicles, although cost reduction strategies are emerging as research progresses to make it more practical for wider adoption. Understanding these strengths and weaknesses helps you appreciate why many autonomous systems rely on a balanced sensor suite for safe and effective navigation.
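
To see why misalignment matters so much, consider a rough worked example. The sketch below assumes a pure angular mounting error and flat geometry, and the 0.5 degree figure is a hypothetical value chosen for illustration. The sideways error grows in proportion to range.

```python
import math


def lateral_error_m(range_m: float, misalignment_deg: float) -> float:
    """Sideways position error caused by a small angular mounting error."""
    return range_m * math.tan(math.radians(misalignment_deg))


# Hypothetical 0.5 degree yaw error, evaluated at a few ranges.
for range_m in (10.0, 50.0, 100.0):
    print(f"{range_m:5.0f} m -> {lateral_error_m(range_m, 0.5):.2f} m off")
```

Under these assumptions a half-degree error shifts an object 50 m away by roughly 0.44 m, which is enough to matter when judging which lane the object is in.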

How Do Cameras Capture Visual Data for Self-Driving Cars?

Cameras in self-driving cars capture visual data by using sensors that detect light and convert it into digital images. Different types of sensors, like RGB and infrared, help the vehicle interpret various conditions and objects. Once the images are collected, advanced processing techniques analyze the data to identify obstacles, lanes, and signs.

Image Capture Process

To capture visual data for self-driving cars, high-resolution image sensors record the environment in real time. Each sensor converts incoming light into electrical signals, which are then processed into digital images. Camera calibration corrects for lens distortion and mounting alignment so that positions in the image correspond reliably to directions in the real world. The cameras send continuous streams of frames that feed tasks such as object detection, lane-marking detection, and traffic-sign recognition. The frames are then synchronized with other sensor inputs, such as LiDAR, and combined through data fusion, enabling your vehicle to interpret its surroundings accurately and react swiftly. This pipeline is vital for safe, reliable autonomous navigation.
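
As an illustration of the lens distortion adjustment mentioned above, here is a minimal sketch of a two-coefficient radial distortion model and its inverse. It works on normalized image coordinates, and the coefficients `k1` and `k2` are made-up values; a real system would estimate them during calibration and would usually model tangential distortion as well.

```python
def distort(x: float, y: float, k1: float, k2: float):
    """Apply radial distortion to an ideal normalized image point."""
    r2 = x * x + y * y
    scale = 1.0 + k1 * r2 + k2 * r2 * r2
    return x * scale, y * scale


def undistort(xd: float, yd: float, k1: float, k2: float, iterations: int = 20):
    """Recover the ideal point from a distorted one by fixed-point iteration."""
    x, y = xd, yd  # start from the distorted point as the first guess
    for _ in range(iterations):
        r2 = x * x + y * y
        scale = 1.0 + k1 * r2 + k2 * r2 * r2
        x, y = xd / scale, yd / scale
    return x, y


# Hypothetical coefficients for a lens with mild barrel distortion.
K1, K2 = -0.2, 0.05
observed = distort(0.3, 0.2, K1, K2)
print("observed:", observed, "corrected:", undistort(*observed, K1, K2))
```

The iteration converges quickly for mild distortion like this. Strongly distorted wide-angle lenses generally need a different model.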

Types of Camera Sensors

Different types of camera sensors play a key role in how self-driving cars perceive their environment. The two main image sensor technologies are CCD (Charge-Coupled Device) and CMOS (Complementary Metal-Oxide-Semiconductor), which differ in sensitivity, readout speed, and power consumption. Whichever type you use, proper calibration is essential for accurate data, and fusing its output with other sensors improves reliability and robustness. Understanding these sensor characteristics helps you select the most suitable cameras for a specific autonomous driving application.

Data Processing Techniques

Self-driving cars rely on advanced data processing techniques to transform raw visual input into meaningful information. Cameras capture vast amounts of visual data, and careful sensor calibration keeps that data accurate and consistent over time. Image processing algorithms filter out noise and enhance clarity, then identify objects, lanes, and signs. Techniques like stereo vision help estimate distances, while deep learning models interpret complex scenes. Finally, data fusion aligns camera images with inputs from radar and LiDAR, creating a fuller view of the environment and a robust foundation for decision-making in autonomous navigation.
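
The stereo vision step can be sketched with the standard pinhole relation, depth = focal length × baseline / disparity. The example assumes a rectified stereo pair, and the focal length and baseline values are hypothetical.

```python
def stereo_depth_m(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth of a point from its disparity between rectified left and right images."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    return focal_px * baseline_m / disparity_px


# Hypothetical rig: 800 px focal length, cameras mounted 12 cm apart.
FOCAL_PX, BASELINE_M = 800.0, 0.12
for disparity in (8.0, 7.0):
    print(f"disparity {disparity:.0f} px -> {stereo_depth_m(FOCAL_PX, BASELINE_M, disparity):.2f} m")
```

With these numbers, a one-pixel change in disparity moves the estimate from 12 m to about 13.7 m. This sensitivity at long range is one reason camera-only depth is usually less precise than a direct LiDAR range measurement.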

Performance of LiDAR and Cameras in Low-Light and Bad Weather

LiDAR and cameras both face significant challenges in low light and bad weather, which affect their ability to perceive the environment accurately. In low-light scenarios, cameras struggle with limited visibility; longer exposure and higher gain help, but noise and graininess often persist. LiDAR generally performs better in the dark since it relies on its own laser pulses rather than ambient light. Bad weather like rain, fog, or snow hampers both sensors: water droplets and snowflakes scatter or absorb laser pulses, producing weak or false returns, while fog and spray reduce contrast in camera images. To maintain reliable perception, data fusion becomes essential, combining sensor outputs to compensate for individual weaknesses. Proper sensor calibration keeps data from both sources aligned, improving the overall robustness of navigation systems in adverse conditions. Dust and dirt can also build up on sensor windows and lenses, reducing accuracy and making regular cleaning part of maintenance.

Cost, Complexity, and Scalability of LiDAR vs. Camera Systems

When comparing LiDAR and camera systems, you’ll notice significant differences in cost efficiency, with cameras generally being more affordable upfront. The complexity of integrating and maintaining these systems varies, often making LiDAR more intricate and expensive to operate. Scalability also presents challenges, as adding more sensors or expanding coverage can quickly escalate costs and technical hurdles for both options.

Cost Efficiency Differences

While both LiDAR and camera systems aim to provide accurate environmental perception, their cost efficiency differences are significant. LiDAR systems tend to be more expensive upfront due to costly sensors and complex calibration processes. Cameras are generally cheaper but may require extensive data fusion and sensor calibration to match LiDAR’s accuracy. You’ll find that:

- LiDAR sensors have higher initial costs.

- Cameras are more affordable but need supplemental sensors for reliable perception.

- Data fusion adds complexity and costs, especially with camera systems.

- Scalability favors cameras, as they’re easier and cheaper to deploy in large quantities.

- Long-term expenses for LiDAR include sensor replacement and calibration, whereas cameras mostly involve software updates.

Understanding these factors helps you evaluate which system offers better cost efficiency for your application.

System Complexity Levels

Understanding the system complexity of LiDAR and camera setups is essential for evaluating their practicality and scalability. LiDAR systems tend to be more complex on the hardware side because accurate depth measurement depends on precise sensor calibration, which can be time-consuming and may need ongoing adjustment. Cameras involve less hardware complexity but demand sophisticated perception algorithms to interpret visual information reliably. In short, LiDAR delivers 3D structure directly at the price of hardware, calibration, and maintenance effort, while cameras shift the burden to processing. Combining data from multiple sources increases system complexity further, especially when integrating LiDAR and cameras for seamless navigation.
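
Part of the integration complexity comes from the number of parameters that must be known before LiDAR and camera data can even be overlaid. The sketch below projects one LiDAR point into a camera image with a simple pinhole model. The rotation, translation, and intrinsics are hypothetical placeholders, and the axis conventions (LiDAR: x forward, y left, z up; camera: x right, y down, z forward) are an assumption rather than a universal standard.

```python
def project_point(point_lidar, rotation, translation, fx, fy, cx, cy):
    """Map a LiDAR-frame point (metres) to camera pixel coordinates, or None if behind the camera."""
    # Extrinsics: move the point into the camera frame, p_cam = R * p_lidar + t.
    x, y, z = (
        sum(rotation[i][j] * point_lidar[j] for j in range(3)) + translation[i]
        for i in range(3)
    )
    if z <= 0:
        return None  # the point is behind the image plane
    # Intrinsics: perspective divide, then scale and shift into pixels.
    return (fx * x / z + cx, fy * y / z + cy)


# Hypothetical calibration: axes remapped as described above, sensors co-located.
R = [[0.0, -1.0, 0.0],
     [0.0, 0.0, -1.0],
     [1.0, 0.0, 0.0]]
T = [0.0, 0.0, 0.0]

pixel = project_point([10.0, 1.0, 0.5], R, T, fx=800.0, fy=800.0, cx=640.0, cy=360.0)
print(pixel)
```

Every value in `R`, `T`, and the intrinsics has to come from calibration, so an error in any of them shifts where the LiDAR point lands in the image.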

Scalability Challenges

Scaling LiDAR and camera systems presents distinct challenges in cost, complexity, and deployment. LiDAR’s high sensor costs and intricate calibration processes can quickly inflate budgets. Camera systems, while cheaper, demand extensive processing and data fusion to interpret visual data accurately, especially under varying conditions. Maintaining sensor calibration across multiple units adds another layer of difficulty and affects system reliability. Integrating sensors for seamless operation also requires sophisticated hardware and software. Consider these points:

- High sensor costs for LiDAR limit large-scale deployment

- Complex sensor calibration processes affect performance

- Data fusion challenges with camera systems under diverse environments

- Increasing hardware complexity for multi-sensor integration

- Scalability depends on balancing cost, calibration, and data processing needs

Both approaches face hurdles, but understanding these challenges helps you choose the right system for your needs. Optimizing sensor placement and the operating environment can also improve system performance.

When Is Combining LiDAR and Camera Data Most Effective?

Combining LiDAR and camera data becomes most effective when you need a thorough understanding of your environment, especially in challenging conditions. Sensor fusion lets you merge the strengths of both sensors, providing more reliable perception. This approach is particularly valuable in scenarios with poor lighting, fog, or rain, where one sensor might struggle. Data redundancy means that critical information is still available even if one sensor’s data becomes unreliable. Using both technologies together enhances object detection, classification, and environment mapping, reduces blind spots, and improves accuracy, particularly in complex or unpredictable environments where relying on a single sensor could lead to errors. Fused systems also benefit from sensor reliability assessments, which help maintain consistent performance across diverse conditions, and from cybersecurity measures that protect sensor data against potential threats.
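
One simple way to picture redundancy is an inverse-variance weighted average, where each sensor's estimate counts in proportion to how much it is trusted. This is a sketch of that one technique, not a description of how any particular vehicle fuses data. The uncertainty values are hypothetical, and a production system would more likely use a Kalman filter or a learned fusion model.

```python
def fuse(measurements):
    """Fuse (value, sigma) pairs from the sensors that are currently trusted.

    Returns the inverse-variance weighted mean and its standard deviation.
    """
    if not measurements:
        raise ValueError("no trusted sensor data available")
    weights = [1.0 / (sigma * sigma) for _, sigma in measurements]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, measurements)) / total
    return value, total ** -0.5


# Hypothetical range estimates to the same obstacle, in metres.
lidar = (20.0, 0.05)   # precise direct range measurement
camera = (21.0, 1.0)   # coarser estimate derived from images

print("both sensors:", fuse([lidar, camera]))
print("LiDAR dropped out:", fuse([camera]))
```

When both sensors report, the result stays close to the more precise LiDAR value. When one drops out, the system still has an estimate, just with larger uncertainty.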

Common Misconceptions About LiDAR and Camera Technologies

Many people believe that LiDAR and camera technologies are interchangeable or that one can fully replace the other. This misconception overlooks their unique strengths and limitations. Some believe cameras work well in all lighting conditions, ignoring how much better LiDAR holds up in low light. Others think LiDAR is always more accurate, but it can struggle with reflective surfaces. People also assume data fusion is straightforward, when in reality combining LiDAR and camera data requires complex algorithms, careful time synchronization, and precise alignment between the sensors. Sensor calibration is likewise often underestimated: it affects the reliability of both systems, and neglecting it can lead to significant perception errors. Ultimately, many overlook how integrating both sensors provides a more reliable perception system, and that neither technology alone offers a complete solution.
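
Synchronization is a good example of fusion being less straightforward than it sounds, because the two sensors rarely capture at the same instant or at the same rate. A minimal sketch, assuming both streams are stamped by a shared clock and using hypothetical frame rates, pairs each camera frame with the nearest LiDAR scan and discards pairs that are too far apart in time.

```python
import bisect


def match_nearest(camera_ts, lidar_ts, max_gap_s):
    """Pair each camera timestamp with the closest LiDAR timestamp within max_gap_s.

    lidar_ts must be sorted in ascending order.
    """
    pairs = []
    for t in camera_ts:
        i = bisect.bisect_left(lidar_ts, t)
        # Only the neighbours on either side of the insertion point can be nearest.
        candidates = lidar_ts[max(i - 1, 0):i + 1]
        if not candidates:
            continue
        best = min(candidates, key=lambda s: abs(s - t))
        if abs(best - t) <= max_gap_s:
            pairs.append((t, best))
    return pairs


# Hypothetical streams: camera at about 30 Hz, LiDAR at 10 Hz, times in seconds.
camera_times = [0.0, 0.033, 0.066, 0.5]
lidar_times = [0.0, 0.1, 0.2]
print(match_nearest(camera_times, lidar_times, max_gap_s=0.05))
```

The last camera frame finds no scan within 50 ms and is left unpaired. At driving speeds even a small timing gap matters, since a vehicle travelling at 20 m/s covers a full metre in those 50 ms.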

How to Choose Between LiDAR and Cameras for Your Autonomous Project

Choosing between LiDAR and cameras for your autonomous project hinges on understanding the specific environment and operational requirements. If your project demands precise distance measurements or operates in low-light conditions, LiDAR offers reliable, high-accuracy data. Conversely, cameras excel at recognizing textures, colors, and complex scenes, making them ideal for detailed visual understanding. To optimize performance, consider sensor fusion, which combines both sensors to leverage their strengths and compensate for their weaknesses. This approach provides data redundancy, so the system remains functional even if one sensor fails or encounters limitations. By evaluating factors like environmental conditions, processing capabilities, and safety needs, you can make an informed choice tailored to your application’s specific demands.

Frequently Asked Questions

How Do LiDAR and Cameras Handle Dynamic Objects Differently?

You’ll find that LiDAR detects dynamic objects through precise 3D point clouds, capturing their shape and movement effectively. Cameras handle object detection by analyzing visual cues like color and texture. Data fusion combines both sensors’ strengths, improving accuracy. While LiDAR excels in low-light conditions, cameras provide contextual details. Together, they offer an all-encompassing understanding of dynamic objects, making navigation safer and more reliable.

What Are the Maintenance Requirements for LiDAR Versus Cameras?

Both sensors need clean optical surfaces and periodic calibration checks. LiDAR calibration tends to be the more involved of the two, and sensor replacement and recalibration are among its long-term costs. Cameras are simpler to maintain: they need regular lens cleaning and image-quality checks, and most of their upkeep comes through software updates. In either case, recheck calibration after an impact or after remounting a sensor.

Can LiDAR and Cameras Operate Effectively Together in All Environments?

LiDAR and cameras can work effectively together across many environments, though not all. You need to check sensor calibration regularly to keep data accurate, and you need to account for how each sensor adapts to its environment. Cameras excel in well-lit conditions, while LiDAR performs better in low light. Combining both offers a more complete perception system, but extreme conditions such as dense fog or heavy rain degrade both sensors and can challenge their joint effectiveness.

How Long Do LiDAR Sensors Typically Last Compared to Cameras?

LiDAR sensors often last around 5 to 10 years, depending on design, usage, and maintenance, but treat that figure as a rough guide and check the manufacturer’s rating for your specific unit. Both sensors need regular calibration to stay accurate, and both are affected by environmental exposure such as dust, rain, or fog. Proper care and maintenance can extend the life of both, ensuring reliable performance over time.

Are There Safety Concerns Specific to LiDAR or Camera Failures?

Yes, sensor failures pose safety concerns. If a LiDAR sensor or camera malfunctions, your vehicle’s navigation could be compromised. Regular sensor calibration is essential to maintain accuracy, especially after impacts or software updates. Weather resilience varies; LiDAR can struggle in fog or heavy rain, while cameras may be affected by dirt or low light. Ensuring proper maintenance helps mitigate risks and keeps your sensors reliable in different conditions.

Conclusion

Ultimately, choosing between LiDAR and cameras is like selecting a lighthouse or a mirror for your autonomous journey. LiDAR shines bright in the dark, measuring the world with steady precision when ambient light is gone. Cameras reflect the world’s vibrant details, capturing life in every hue. By understanding their unique lights, and combining them where conditions demand it, you can craft a navigation system that’s not just reliable but a beacon of safety, illuminating the path ahead with clarity and confidence.