Many automation predictions have fallen short because of overhyped claims and hidden flaws. For example, AI in healthcare often promises near-perfect accuracy but struggles in real-world settings with poor data quality and technical issues. Automated hiring tools have produced biased outcomes that favor certain racial and gender groups. Self-driving cars face sensor and software limitations that threaten safety. Facial recognition systems are less accurate outside the lab, and predictive analytics can inspire false confidence. Read on for the reasons behind these failures and what you should watch for next.

Key Takeaways

- AI in healthcare often overpromises on accuracy; deployments like Google’s retinopathy tool stumbled on poor image quality and environmental issues.

- Automated hiring tools exhibit racial and gender biases, undermining fairness and leading to mistrust among diverse job seekers.

- Autonomous vehicle predictions failed as sensor and software limitations caused misinterpretations and accidents in complex environments.

- Facial recognition systems claimed near-perfect accuracy but underperformed in real-world conditions, especially for minority groups.

- Overconfidence in predictive analytics resulted in significant errors during high-stakes decisions, exemplified by flawed election polling models.

As an affiliate, we earn on qualifying purchases.

Overhyping AI in Healthcare Diagnostics

Despite claims of near-perfect accuracy, AI in healthcare diagnostics often falls short in real-world settings. You might have heard that AI systems outperform humans, but studies report that 94% of the tools evaluated were less accurate than a single radiologist in controlled tests. High-profile failures, like Google’s retinopathy detection in Thailand, exposed problems such as poor lighting, low image quality, and image rejection rates of up to 21%. When deployed in hospitals, AI models often perform worse than in labs, hampered by inconsistent data and technical delays that slow workflows. Independent tests expose significant gaps between vendor claims and measured results, which undermines trust. Overhyping AI without accounting for these limitations creates false expectations and puts patient safety at risk. Models also struggle to adapt to diverse clinical environments and patient populations, so the quality and variability of clinical data largely determine whether a deployment succeeds.

Biases Hidden in Automated Hiring Tools

Automated hiring tools often claim to streamline recruitment and eliminate human bias, but in reality they can perpetuate and even amplify societal prejudices. Research from the University of Washington shows AI models favor names linked to white candidates 85% of the time, while female-associated names are favored only 11% of the time. Black male names are rarely favored over white male names, revealing deep gender and racial biases rooted in training data and design choices. Many AI systems lack transparency because of proprietary restrictions, which makes bias hard to detect. Nearly half of job seekers distrust AI’s fairness, and that distrust is stronger among minority applicants. These biases can unfairly exclude qualified candidates from underrepresented groups, worsening diversity problems. Without proper oversight, AI tools risk reinforcing discrimination rather than improving fairness in hiring.

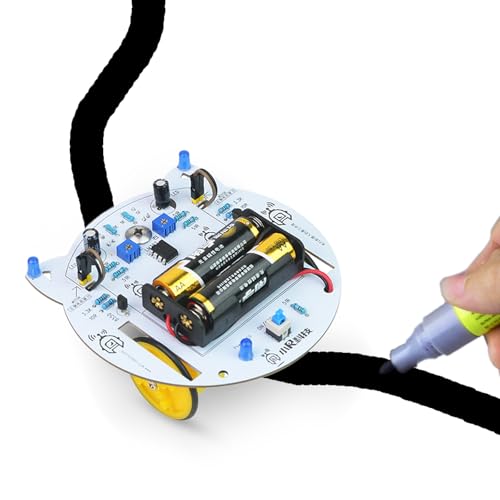

Soldering Practice Kit, Line Following Robot Car for Robotic Smart Robot Car Kit Line Tracking Car Kit Science Kits Hands-on Project for Learning Basic Soldering(Batteries not Included)

Smart Line Tracking Robot Car: Equipped with advanced sensors, this robot car autonomously follows lines with precision, perfect…

As an affiliate, we earn on qualifying purchases.

As an affiliate, we earn on qualifying purchases.

Challenges of Ensuring Autonomous Vehicle Safety

Ensuring the safety of autonomous vehicles is a complex challenge because these systems must reliably interpret dynamic and unpredictable road environments. Limited sensor capabilities can cause misinterpretations, especially in complex situations, leading to accidents. Software errors and hardware malfunctions also pose risks, undermining system reliability. Machine learning limitations mean these vehicles might misjudge scenarios that were absent from their training data, increasing the chance of a crash. Human-machine interaction adds another layer of difficulty, especially during handovers between the system and the driver. To understand these issues better, consider the table below:

| Challenge | Impact | Example |
|---|---|---|
| Sensor Limitations | Misinterpretation of surroundings | Inability to detect sudden obstacles |
| Software Errors | Malfunctions and crashes | Faulty decision-making |
| Machine Learning Limits | Misjudging complex scenarios | Unexpected reactions in new environments |
| Human Interaction | Handover confusion | Delayed responses, accidents |

Ongoing research aims to address these limitations by improving sensor technology, making software more robust, and refining human-machine interfaces. Efforts are also underway to develop more reliable algorithms that adapt better to unforeseen circumstances.

The Fallacy of Accuracy in Facial Recognition

Many claims of near-perfect accuracy in facial recognition systems are overstated because they rely heavily on ideal testing conditions that rarely match real-world environments. In labs, top algorithms boast accuracy rates over 99.97%, often with high-quality images and minimal environmental variation. However, real-world factors like poor lighting, awkward angles, and occlusions considerably reduce reliability. Demographic biases also persist: systems perform less accurately on Black individuals, leading to unfair errors. Despite advances, spoofing remains a threat, and sophisticated attacks can bypass anti-spoofing measures. Aging and environmental changes further degrade performance over time. Taken together, these gaps show that high accuracy claims usually reflect controlled tests rather than the unpredictable conditions of everyday use, which undermines confidence in facial recognition’s reliability.

Overconfidence in Predictive Analytics and System Failures

Despite the promise of high accuracy, predictive analytics often falls short because organizations rely too heavily on models that cannot account for every real-world complexity. Overconfidence in these models leads to overlooked limitations and unexpected failures. You might trust a model’s output without questioning its assumptions, but unknown variables and changing conditions can cause significant errors. The 2016 US election polling failures showed that even sophisticated big-data models can badly underestimate the probability of an outcome.

Limitations of Predictive Models

- Data quality issues, such as errors and outdated information, undermine predictions.

- Integration challenges from disparate sources affect accuracy.

- Signature identification for failure detection remains unreliable.

- Organizational skepticism hampers user trust and adoption.

Additionally, a lack of ongoing model validation can cause organizations to miss warning signs before issues escalate.
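
One way to make overconfidence visible during ongoing validation is to score probability forecasts against what actually happened. The sketch below uses the Brier score (the mean squared gap between forecast probability and the 0/1 outcome; lower is better). The outcomes and both sets of forecasts are made-up numbers for illustration, not results from any real model.

```python
def brier_score(forecasts, outcomes):
    """Mean squared gap between forecast probabilities and 0/1 outcomes."""
    if len(forecasts) != len(outcomes):
        raise ValueError("forecasts and outcomes must have the same length")
    squared_gaps = [(p - y) ** 2 for p, y in zip(forecasts, outcomes)]
    return sum(squared_gaps) / len(squared_gaps)


# Hypothetical validation set: 1 means the event happened, 0 means it did not.
outcomes = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0]

# Both hypothetical models make the same yes/no calls (3 of 10 are wrong),
# but they attach very different levels of confidence to those calls.
calls = [1, 1, 1, 1, 0, 0, 0, 0, 1, 1]
overconfident = [0.95 if call else 0.05 for call in calls]
hedged = [0.70 if call else 0.30 for call in calls]

overconfident_score = brier_score(overconfident, outcomes)
hedged_score = brier_score(hedged, outcomes)

print(f"overconfident model: {overconfident_score:.4f}")
print(f"hedged model:        {hedged_score:.4f}")
```

Both models are right equally often, yet the overconfident one scores worse because it is penalized heavily each time a near-certain forecast misses. Tracking a score like this on fresh data is one simple form of the ongoing validation described above.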

Frequently Asked Questions

How Can AI Be Designed to Avoid Overpromising in Healthcare?

You can avoid overpromising AI in healthcare by setting realistic expectations upfront. Clearly communicate AI’s capabilities and limitations, emphasizing it as a tool to support, not replace, clinicians. Focus on addressing specific, proven pain points rather than promising revolutionary outcomes. Use evidence-based evaluations to demonstrate real benefits, and avoid hype that inflates what AI can accomplish. This approach builds trust and ensures stakeholders understand AI’s true potential and boundaries.

What Methods Exist to Detect and Correct Bias in AI Hiring Tools?

You might think AI hiring tools are unbiased, but they often aren’t. To detect bias, you can use regression analysis, run controlled experiments, and compare AI decisions with human ones. To correct it, re-sample the training data, anonymize candidate details, and adjust the algorithm. Regular audits and inclusive training data help improve fairness. By actively monitoring and updating these systems, you build trust and create a more equitable hiring process for everyone.
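
A regular audit can start with something as simple as comparing how often the tool advances candidates from each group. The sketch below computes per-group selection rates and each group's ratio to the best-treated group, then flags ratios under 0.8, the "four-fifths" rule of thumb used in US employment guidance as a screening threshold. The group labels and counts are invented for illustration, and a flag here is a prompt for closer review, not proof of discrimination.

```python
from collections import Counter


def selection_rates(decisions):
    """Return the share of candidates advanced in each group.

    `decisions` is an iterable of (group, advanced) pairs, where
    `advanced` is True when the tool passed the candidate forward.
    """
    totals, advanced = Counter(), Counter()
    for group, was_advanced in decisions:
        totals[group] += 1
        if was_advanced:
            advanced[group] += 1
    return {group: advanced[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Divide each group's selection rate by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        return {group: 0.0 for group in rates}
    return {group: rate / best for group, rate in rates.items()}


# Hypothetical audit log: made-up numbers, not data from any real tool.
audit_log = (
    [("group_a", True)] * 40 + [("group_a", False)] * 60
    + [("group_b", True)] * 20 + [("group_b", False)] * 80
)

rates = selection_rates(audit_log)
ratios = impact_ratios(rates)

# Flag groups whose ratio falls below the four-fifths screening threshold.
flagged = sorted(group for group, ratio in ratios.items() if ratio < 0.8)

print("selection rates:", rates)
print("impact ratios:", ratios)
print("flagged for review:", flagged)
```

Running the same check on name-swapped copies of identical resumes turns it into a small controlled experiment: any gap that remains can only come from the name.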

How Do Autonomous Vehicles Handle Unpredictable Real-World Scenarios Safely?

Autonomous vehicles rely on advanced sensors and machine learning algorithms to interpret their environment and make decisions quickly. However, these systems can struggle with unexpected situations like sudden pedestrian movements. To improve safety, they also include human oversight and fail-safe mechanisms, but challenges remain, especially in complex or unusual scenarios where real-world unpredictability tests their limits.

Why Is Accuracy Alone Insufficient to Evaluate Facial Recognition Systems?

You might think accuracy alone shows how good a facial recognition system is, but it doesn’t tell the full story. Factors like lighting, pose, and image quality vary in real life, impacting performance. A single accuracy figure also hides the different error types (false matches versus false non-matches), fairness across demographics, and speed. To truly evaluate a system, you need additional metrics that address bias, robustness, and operational conditions, not just raw accuracy numbers.
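
To see how one headline number can hide uneven performance, the sketch below breaks a set of face-comparison trials down by group and by error type. Each trial is a similarity score plus a flag saying whether the two images really show the same person. The scores, group names, and the 0.5 decision threshold are all hypothetical values chosen for illustration.

```python
def error_rates(trials, threshold):
    """Return (false match rate, false non-match rate) at a threshold.

    `trials` is a list of (similarity_score, same_person) pairs.
    """
    impostor_scores = [score for score, same in trials if not same]
    genuine_scores = [score for score, same in trials if same]
    false_match_rate = (
        sum(score >= threshold for score in impostor_scores) / len(impostor_scores)
    )
    false_non_match_rate = (
        sum(score < threshold for score in genuine_scores) / len(genuine_scores)
    )
    return false_match_rate, false_non_match_rate


def accuracy(trials, threshold):
    """Share of trials where the match decision agrees with the truth."""
    correct = sum((score >= threshold) == same for score, same in trials)
    return correct / len(trials)


# Hypothetical trials: group_x is well represented and easy for the system,
# group_y is a smaller group on which the system makes more mistakes.
trials_by_group = {
    "group_x": [(0.9, True)] * 20 + [(0.1, False)] * 20,
    "group_y": [
        (0.9, True), (0.45, True), (0.7, True), (0.4, True),
        (0.2, False), (0.55, False), (0.3, False), (0.1, False),
    ],
}
THRESHOLD = 0.5  # assumed decision threshold

all_trials = [trial for trials in trials_by_group.values() for trial in trials]
overall_accuracy = accuracy(all_trials, THRESHOLD)
per_group = {
    group: error_rates(trials, THRESHOLD)
    for group, trials in trials_by_group.items()
}

print(f"overall accuracy: {overall_accuracy:.4f}")
for group, (fmr, fnmr) in per_group.items():
    print(f"{group}: false match rate={fmr:.2f}, false non-match rate={fnmr:.2f}")
```

In this toy data the overall accuracy looks strong because the larger group dominates the average, while the smaller group absorbs every error. Reporting both error types per group is what surfaces that gap.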

What Strategies Improve Resilience Against Systemic Failures in Automated Systems?

You might think automation failures are inevitable, but proven strategies can bolster resilience. Implement redundancy and flexible system designs that allow quick adjustments during faults. Regular maintenance and monitoring catch issues early, while layered security and incident response plans prepare you for cyber threats and failures. Training your team in error management ensures quick recovery from mistakes. Combining these approaches creates a robust system that withstands unexpected disruptions and maintains continuous operation.

Conclusion

Don’t assume automation always delivers perfect results. While AI promises innovation, it’s easy to overlook its limitations and pitfalls. Instead of blindly trusting these systems, stay informed and critical. If you think automation is foolproof, remember these failures—and how they happened. By understanding what went wrong, you can better navigate the future of AI, avoiding costly mistakes and making smarter decisions in your own work and life.